The First Skill AI Engineers Build

Control before optimization.

This is part of an ongoing series breaking down the AI Engineering Stack layer by layer. Today: Layer 1 — Foundational Environment.

The Demo Illusion

You built something that worked.

The output looked right. The model responded well. You ran it three times and it held up.

Then you shared it. Or deployed it. Or came back to it two weeks later.

And it broke.

Not dramatically. It just... stopped being reliable. Different outputs. A missing dependency. A model version that quietly changed. An environment that was never stable to begin with.

This is the demo illusion.

The system did not fail because the model was wrong. It failed because the conditions were never controlled.

Most builders assume reliability comes from better prompting. Better models. Smarter design.

It does not.

The first skill AI engineers build is not better prompting. It is controlling the conditions under which systems run.

What Reproducibility Actually Means

Most people hear “reproducibility” and think it sounds academic.

It is not.

It is the most practical discipline in AI engineering.

Reproducibility means one thing:

Given the same inputs, the same configuration, the same model version, and the same dependencies — your system produces the same predictable behavior.

Not approximately the same. Not usually the same. The same. Every time. Across environments.

This is not about perfection. It is about control.

When you can reproduce a result, you can debug it. You can improve it. You can hand it to someone else and trust they are running the same thing you built.
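One way to make "running the same thing" checkable is to fingerprint every run. Below is a minimal Python sketch. It assumes you can collect the four ingredients from the definition above (inputs, configuration, model version, dependencies) as plain dictionaries and strings. The function name, model identifiers and pinned version are hypothetical placeholders.

```python
import hashlib
import json


def run_fingerprint(inputs: dict, config: dict, model_version: str, dependencies: dict) -> str:
    """Return a SHA-256 hash of everything that defines a run (hypothetical helper)."""
    payload = json.dumps(
        {
            "inputs": inputs,
            "config": config,
            "model_version": model_version,
            "dependencies": dependencies,
        },
        sort_keys=True,  # key order must not change the hash
        separators=(",", ":"),  # fixed separators so whitespace never varies
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


inputs = {"question": "Summarize the Q3 report"}
deps = {"requests": "2.31.0"}  # placeholder pin
mine = run_fingerprint(inputs, {"temperature": 0.0, "max_tokens": 256}, "example-model-2024-08-06", deps)
# Same conditions, keys written in a different order: same fingerprint.
theirs = run_fingerprint(inputs, {"max_tokens": 256, "temperature": 0.0}, "example-model-2024-08-06", deps)
# The provider moved the model underneath you: different fingerprint.
drifted = run_fingerprint(inputs, {"temperature": 0.0, "max_tokens": 256}, "example-model-2024-11-20", deps)

print(mine == theirs)   # True
print(mine == drifted)  # False
```

If two runs share a fingerprint, they ran under the same conditions. That does not make a probabilistic model deterministic. It does tell you that a difference in output did not come from your side of the system.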

If you cannot reproduce it, you cannot debug it.

And if you cannot debug it, you are not engineering. You are guessing.

That distinction matters more than any model benchmark.

Why Learners Skip This Skill

Most people building with AI right now are focused on the same things.

Which model is best this week. What prompt gets the cleanest output. Which benchmark just dropped.

That focus is not wrong. It is just incomplete.

And it is exactly what keeps most builders stuck at the demo stage.

In Issue 1, we talked about the difference between AI learners and AI engineers. Learners optimize the model layer. Engineers think across the stack.

This is where that difference becomes concrete.

Learners focus on:

Model releases

Prompt tweaks

Benchmark comparisons

Engineers focus on:

Version control

Stability

Replayability

Traceability

Reproducibility gets skipped because it is invisible. It does not show up in a demo. It does not make a good LinkedIn post. It does not feel like progress when you are doing it.

But it is the foundation everything else is built on.

Skip it early and you build on sand. Every optimization you layer on top becomes fragile.

Why This Is Harder With LLM Systems

Traditional software is deterministic.

Same input. Same code. Same output. Every time.

LLM systems are not.

The model is probabilistic by design. Providers push silent updates. Sampling at a nonzero temperature can return different outputs for the same prompt. A prompt that worked in March may behave differently in May. You did not change anything. The environment around it changed.

This creates a specific engineering challenge.

You cannot control the model completely. You never could.

Which means everything around the model must be more disciplined, not less.

Version control matters more. Logging matters more. Configuration tracking matters more.

The less predictable the core component, the more stable the surrounding system needs to be.

When outputs are unpredictable, your system cannot be.

This is not a reason to distrust LLMs. It is a reason to engineer around them deliberately.

That is what separates a fragile prototype from a production system.

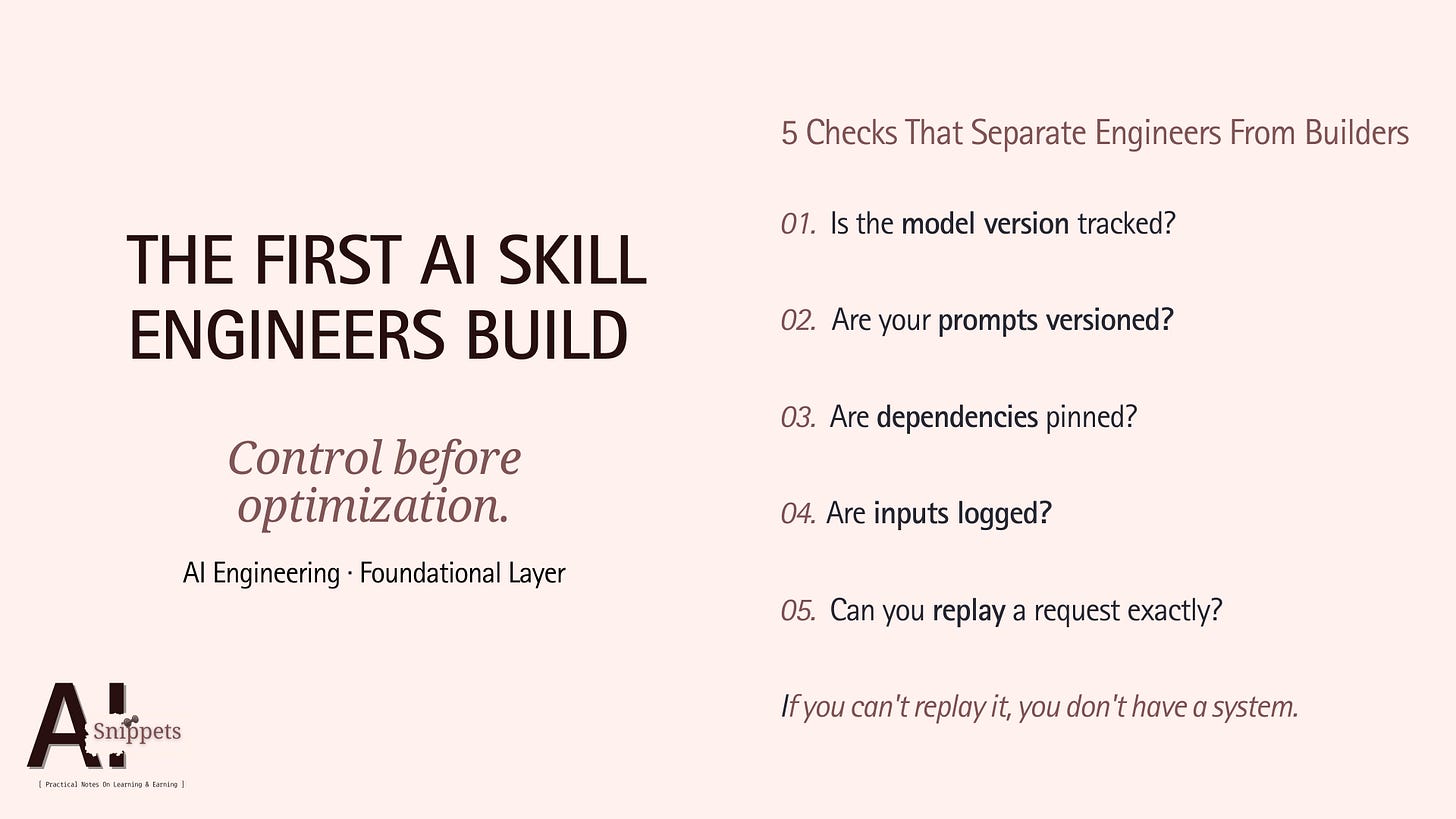

The 5 Checks Before You Touch the Model

Before you optimize performance, before you tweak prompts, before you chase better outputs — stop.

Run these five checks first.

1. Is the model version tracked?

If you are not pinning the exact model version, you are not controlling the experiment. Silent provider updates are real. Lock it down.
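Here is a minimal Python sketch of what "lock it down" can look like. It assumes your provider offers dated snapshot identifiers and returns the identifier it actually served in each response. Many providers do this, but check your provider's documentation. The model names and the `check_served_model` helper are hypothetical.

```python
# Hypothetical dated snapshot; avoid floating aliases that the provider can repoint.
PINNED_MODEL = "example-model-2024-08-06"


class ModelVersionMismatch(RuntimeError):
    """Raised when the provider served a different model than the one pinned."""


def check_served_model(response: dict, pinned: str = PINNED_MODEL) -> None:
    # Assumes the response is a dict with a "model" field naming the served model.
    served = response.get("model")
    if served != pinned:
        raise ModelVersionMismatch(f"pinned {pinned!r}, but provider served {served!r}")


check_served_model({"model": "example-model-2024-08-06", "output": "..."})  # passes silently

try:
    check_served_model({"model": "example-model-2024-11-20", "output": "..."})
except ModelVersionMismatch as err:
    print(err)  # pinned 'example-model-2024-08-06', but provider served 'example-model-2024-11-20'
```

Exact string comparison is the strictest option. Loosen it only if your provider reports identifiers in a different format from the one it accepts.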

2. Are your prompts versioned?

Prompts are code. Treat them like code. If you cannot tell me what prompt was running last Tuesday, you cannot debug what changed.
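In practice this usually means keeping prompt templates in the same repository as your code. The Python sketch below adds one more layer and assumes a small in-code registry. Each prompt has a name, an explicit version and a content hash, and every render returns metadata you can log. The registry, names and templates are hypothetical.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class PromptVersion:
    name: str
    version: str
    template: str

    @property
    def content_hash(self) -> str:
        # Any edit to the template text, even whitespace, changes this hash.
        return hashlib.sha256(self.template.encode("utf-8")).hexdigest()[:12]


# Hypothetical registry; real projects often load these from files under version control.
PROMPTS: Dict[Tuple[str, str], PromptVersion] = {
    ("summarize", "v1"): PromptVersion("summarize", "v1", "Summarize the following text:\n{text}"),
    ("summarize", "v2"): PromptVersion(
        "summarize", "v2", "Summarize the following text in three bullet points:\n{text}"
    ),
}


def render(name: str, version: str, **variables: str) -> Tuple[str, dict]:
    """Fill a specific prompt version and return the text plus metadata to log."""
    prompt = PROMPTS[(name, version)]
    meta = {"prompt": prompt.name, "version": prompt.version, "hash": prompt.content_hash}
    return prompt.template.format(**variables), meta


text, meta = render("summarize", "v2", text="Quarterly revenue rose 4%.")
print(meta["version"])  # v2
```

Log `meta` with every request. Then "which prompt was running last Tuesday" becomes a lookup instead of a guess.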

3. Are dependencies pinned?

Library updates break things quietly. Pin your dependencies. Reproducibility starts at the environment level, not the model level.
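Pins usually live in a lock file or in a requirements file with exact versions (for example `requests==2.31.0`). The Python sketch below is a hypothetical startup check built on the standard library's `importlib.metadata`. It assumes you keep the same pins in a dictionary. It reports every package whose installed version differs from its pin, so the system can refuse to run in a drifted environment.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Dict, Optional


def find_drift(pins: Dict[str, str]) -> Dict[str, Optional[str]]:
    """Map each package whose installed version differs from its pin to what is installed (None if missing)."""
    drift: Dict[str, Optional[str]] = {}
    for package, pinned in pins.items():
        try:
            installed: Optional[str] = version(package)
        except PackageNotFoundError:
            installed = None
        if installed != pinned:
            drift[package] = installed
    return drift


if __name__ == "__main__":
    # Placeholder pins; in a real project, read them from your lock file.
    drift = find_drift({"requests": "2.31.0"})
    print(drift or "environment matches pins")
```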

4. Are inputs logged?

You cannot replay what you did not record. Log every input that enters your system. This is your audit trail and your debugging lifeline.
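Below is a minimal Python sketch of an append-only request log. It assumes one JSON object per line (JSON Lines) in a local file. The field names and the `log_request` helper are hypothetical. What matters is that each record carries everything a replay needs: the model, the exact rendered prompt, the generation config and the output.

```python
import json
import tempfile
import time
import uuid
from pathlib import Path


def log_request(log_path: Path, model: str, rendered_prompt: str, config: dict, output: str) -> str:
    """Append one request record as a JSON line and return its id."""
    record = {
        "request_id": str(uuid.uuid4()),
        "timestamp": time.time(),
        "model": model,
        "rendered_prompt": rendered_prompt,
        "config": config,
        "output": output,
    }
    # Append mode: earlier records are never rewritten.
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, sort_keys=True) + "\n")
    return record["request_id"]


# Demo against a throwaway file; point this at durable storage in a real system.
log_path = Path(tempfile.mkdtemp()) / "requests.jsonl"
request_id = log_request(
    log_path,
    model="example-model-2024-08-06",  # hypothetical model id
    rendered_prompt="Summarize the following text:\nQuarterly revenue rose 4%.",
    config={"temperature": 0.0, "max_tokens": 256},
    output="Revenue grew 4% in the quarter.",  # placeholder output for the demo
)
print(request_id)
```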

5. Can you replay a request exactly?

Take any request your system processed. Can you re-run it precisely — same model, same prompt, same input, same config — and get a consistent result?

If yes, you have a system.

If no, you have a demo.
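The replay check can be sketched in Python as shown below. The sketch assumes each logged record holds the model, the exact rendered prompt, the generation config and the original output. `call_model` is a hypothetical stand-in for your provider client, and a deterministic fake plays that role here. Whether a real provider accepts a `seed` parameter depends on the provider.

```python
from typing import Callable, Tuple


def replay(record: dict, call_model: Callable[..., str]) -> Tuple[str, bool]:
    """Re-run a logged request with its recorded model, prompt and config; report whether the output matches."""
    # Everything comes from the record, nothing from today's defaults.
    new_output = call_model(model=record["model"], prompt=record["rendered_prompt"], **record["config"])
    return new_output, new_output == record["output"]


def fake_model(model: str, prompt: str, temperature: float, seed: int) -> str:
    # Deterministic stand-in for a real client call, for demonstration only.
    return f"{model}|{len(prompt)}|{temperature}|{seed}"


config = {"temperature": 0.0, "seed": 7}  # hypothetical generation config
record = {
    "model": "example-model-2024-08-06",  # hypothetical model id
    "rendered_prompt": "Summarize the following text:\nQuarterly revenue rose 4%.",
    "config": config,
}
record["output"] = fake_model(record["model"], record["rendered_prompt"], **config)

_, matches = replay(record, fake_model)
print(matches)  # True
```

Exact string equality is the strictest definition of "consistent." A real model may not return byte-identical text, even with a pinned version at temperature 0. In that case you may need a looser, task-specific comparison. Either way, a mismatch now points at the model instead of at conditions you never recorded.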

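These five checks cost little to implement: a pinned model string, prompts in version control, a lock file, a log line per request, and a replay script. They change everything about how you build.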

Why This Shows Up on Your Resume Before You Realize It

Anyone can call an API.

That is not a skill anymore. It is a starting point.

What separates junior builders from engineers who get hired, contracted, and trusted with real systems is not model knowledge.

It is system maturity.

Reproducibility is invisible to the end user. No one sees your version control. No one notices your pinned dependencies. No one applauds your logging setup.

But technical reviewers do.

When you walk into an interview or share a portfolio project, reproducibility signals one thing: you have built something you understood well enough to control.

That is rare.

Most AI portfolios are collections of demos. Impressive outputs. No infrastructure. No traceability. No discipline underneath.

Yours does not have to be.

The engineers who get trusted with production systems are not always the most creative. They are the most reliable.

Reliability is a skill. And it starts here.

Foundations Do Not Shine. They Hold.

Nobody notices a stable foundation.

That is the point.

The best-engineered systems are not the ones with the most impressive outputs. They are the ones that run the same way on Monday as they do on Friday. In your environment and in someone else’s. In a demo and in production.

That is what foundations do.

They do not create visible brilliance. They create reliability.

And reliability is what gets you from building things to engineering systems. From experimenting to shipping. From interesting to trusted.

If you take one thing from this issue:

Before you touch the model, control the conditions.

Version it. Pin it. Log it. Replay it.

Build the foundation first. Optimize everything else second.

Next week →

We go one layer up.

Issue 3 takes us inside the Model Layer — the part of the stack everyone obsesses over. Most builders spend 80% of their time here. Most engineers spend 20%.

We’ll break down why, and where the engineers’ other 80% actually goes.